Human Dignity: The Hidden Cost of AI

How deeply do we have to delve into AI before we begin encountering questions about good and evil? In my view, not very far - just as in many other areas of human endeavor.

Consider a simple thought experiment. Imagine you meet a person who can, at any moment, complete a complex fill-in-the-blanks exam in your field of expertise with near-perfect accuracy - enough to earn the highest certification - and who can do so without relying on multiple-choice hints. Would you assume that this person has a strong understanding of the field?

Perhaps. But it’s also possible that they simply memorized the correct answers and don’t truly understand the subject at all.

In practice, however, most fields are far too complex for someone to memorize the answers to every possible fill-in-the-blank question. It’s far more efficient to actually learn the material - through study, through experience, and by developing an internal understanding of how things work. That, after all, is the reason such tests exist: they are meant to probe whether someone has genuinely grasped the underlying knowledge, not merely memorized isolated responses.

Technology operates under different constraints than humans. A system could store a database containing millions of questions and answers and query it through a simple mobile app to achieve excellent scores on such tests. But that would not amount to software-assisted understanding of the field.

If you were an airline pilot, you certainly wouldn’t want your co-pilot to possess that kind of “knowledge” of flying.

That is why it has long been sensible to regularly change the wording of important fill-in-the-blank exam corpora - to make simple answer-lookup cheating impossible.
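To make the weakness of pure answer lookup concrete, here is a minimal sketch. The answer key and the function name are my own hypothetical illustrations, not any real exam system; the point is only that an exact-match lookup fails as soon as the wording changes.

```python
from typing import Optional

# Hypothetical memorized answer key: exact question wording -> answer.
ANSWER_KEY = {
    "Winter is ___": "cold",
    "Camels walk on ___": "sand",
}


def lookup_answer(question: str) -> Optional[str]:
    """Return the memorized answer, or None if this exact wording is unknown."""
    return ANSWER_KEY.get(question)


# The original wording is found in the key.
original = lookup_answer("Winter is ___")

# The same fact, reworded, is not found: the lookup has no notion of meaning.
reworded = lookup_answer("In winter the weather is ___")

print(original)
print(reworded)
```

The first call returns the memorized word, while the reworded question returns nothing at all.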

Then large language models (LLMs) introduced a different approach, in which changes in wording are no longer an obstacle. If every word in a language is assigned a numerical ID, a model can learn a very large mathematical formula that predicts the most likely missing word given the surrounding text. No matter how the sentence is phrased, the model can attempt to infer the missing word that best fits.

An LLM is, in essence, a large formula compiled from a huge spreadsheet of sentences, with missing words in one column and the correct words in another. This is called the training data. It’s not a perfect transformation - only an approximate one. But in this new representation of knowledge, the exact wording no longer matters.
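As a rough sketch of what such a spreadsheet might look like, the snippet below assigns each word a numerical ID and builds rows that pair a sentence containing a blank with the word that belongs there. The two sentences, the `___` marker, and the function names are assumptions made for illustration only; real training pipelines differ in many details.

```python
def build_vocabulary(sentences):
    """Assign each distinct word a numerical ID in order of first appearance."""
    vocab = {}
    for sentence in sentences:
        for word in sentence.split():
            # setdefault only inserts the word if it is not in the dict yet.
            vocab.setdefault(word, len(vocab))
    return vocab


def build_rows(sentences, blank="___"):
    """Build one row per word: (sentence with that word blanked, the word)."""
    rows = []
    for sentence in sentences:
        words = sentence.split()
        for i, word in enumerate(words):
            masked = words[:i] + [blank] + words[i + 1:]
            rows.append((" ".join(masked), word))
    return rows


sentences = ["Winter is cold", "Summer is warm"]  # toy stand-in for training data
vocab = build_vocabulary(sentences)
rows = build_rows(sentences)

for masked_sentence, missing_word in rows:
    print(f"{masked_sentence!r:20} -> {missing_word!r} (ID {vocab[missing_word]})")
```

Each printed line corresponds to one row of the spreadsheet: the sentence with a missing word in one column and the correct word in the other.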

Internally, an LLM has nothing to do with a chat: it’s not about questions and answers, but rather about filling in missing words. When you ask an AI chatbot a question, it reformulates it internally as a sentence with missing words and then fills them in based on the formula.

Just to give you a visual impression of what such a formula might look like, imagine the sentence “Winter is cold,” which the software sees as a sequence of words: X = Winter, Y = is, Z = cold. If we know the numerical IDs of “Winter” and “is,” we could use the formula to calculate a likely ID for the word in Z.

The spreadsheet with training data is trillions upon trillions of rows long, but if it were short, the formula might visually look something like sin(4X + 2Y + 10Z + 5) = 0. Many of us solved such equations in high school and can imagine how to calculate the missing value if we know the other two.

In reality, the formula would use a slightly different function than a sine function, but most importantly it would be much longer and more deeply nested, since it needs to work not just for “Winter is cold,” but also for most of the other rows in the training spreadsheet. Conceptually, however, it’s not rocket science - this is what I’m trying to illustrate here.
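For readers who want to see the high-school step spelled out: sin(t) = 0 exactly when t is a whole multiple of pi, so 4X + 2Y + 10Z + 5 = k·pi for some integer k, and therefore Z = (k·pi - 4X - 2Y - 5) / 10. The sketch below solves the toy equation this way. The word IDs and the choice of k are arbitrary assumptions of mine, and the result is not a real word ID; it only shows that the missing value can be computed from the other two.

```python
import math


def solve_z(x: float, y: float, k: int = 0) -> float:
    """Solve sin(4x + 2y + 10z + 5) = 0 for z on the branch 4x + 2y + 10z + 5 = k*pi."""
    return (k * math.pi - 4 * x - 2 * y - 5) / 10


x, y = 1, 2          # hypothetical IDs for "Winter" and "is"
z = solve_z(x, y, k=5)  # k is an arbitrary choice of solution branch

# Plug the result back in to check that the equation is satisfied.
residual = math.sin(4 * x + 2 * y + 10 * z + 5)
print(z, residual)
```

The residual comes out as zero up to floating-point rounding, which confirms that the computed Z satisfies the toy formula.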

This kind of “artificial understanding” is a lot like a strategy many of us use when we can’t remember a particular test answer: “If the sentence starts with ‘Camel,’ the correct answer is ‘sand.’” The mathematical model can capture much more complex “hacks.”

In this analogy, numbers such as 4, 2, 10, and 5 would be called parameters, and the number of consecutive words the model focuses on (X, Y, Z) is known as the context window. Modern frontier LLMs are reported to contain a trillion parameters or more. Even calculating the next word can therefore be computationally demanding, but the real expense lies in finding the right parameter values through computational brute force rather than through any intelligent search.

That process requires immense computing power and energy; sometimes it even requires its own power plant. Suddenly, our training spreadsheet has been converted into a large and immensely computationally expensive formula that can handle different phrasings of the facts contained in the training data.

The training data can now be discarded - or, better yet, kept for calculating the next version of the formula. Researchers constantly come up with improvements to the underlying model architecture, and the training data itself becomes outdated fairly quickly. In practice, the ideal setup might involve one power plant for training, another for serving customers, and yet another for research and innovation. Good.

There is one neat trick, however, that people often use to begin their explanations of LLMs - and which I have deliberately postponed until now. The formula is so well constructed that if you always let it fill in the next word based on, say, the 10 preceding words, you can generate a sequence of words as long as you wish.
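The loop behind this trick can be sketched in a few lines. To keep the example tiny, I replace the learned formula with a simple table of counted word sequences and use the 2 preceding words instead of 10; both are simplifying assumptions, and a real LLM does not work from such a table. What carries over is the loop itself: predict the next word, append it, and repeat.

```python
from collections import Counter, defaultdict


def train_next_word_table(sentences, context_size=2):
    """Count which word follows each run of `context_size` preceding words."""
    table = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for i in range(context_size, len(words)):
            context = tuple(words[i - context_size:i])
            table[context][words[i]] += 1
    return table


def generate(table, start, max_words=10, context_size=2):
    """Repeatedly append the most frequent next word for the latest context."""
    words = list(start)
    while len(words) < max_words:
        context = tuple(words[-context_size:])
        if context not in table:  # unseen context: the toy table has no guess
            break
        next_word = table[context].most_common(1)[0][0]
        words.append(next_word)
    return " ".join(words)


# Toy stand-in for the training data.
corpus = ["winter is cold and dark", "summer is warm and bright"]
table = train_next_word_table(corpus)

print(generate(table, ["winter", "is"]))
print(generate(table, ["summer", "is"]))
```

Starting from just two words, the loop reproduces the rest of each toy sentence one word at a time and stops when it reaches a context it has never seen.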

With large enough context windows, it can produce clever sentences about your field of expertise that sound almost as if they reflect a PhD-level understanding. That is what gives the technology its “wow” effect - especially if you tweak it to appear like a chat, where part of the conversation is provided by the user and part is generated by the formula.

Some individuals can even fall in love with such a formula, under the illusion that it has human consciousness.

But let us return to our earlier question about understanding. Imagine again that you are the airline pilot who discovers that your co-pilot carries a device in his pocket that gives him a computer-aided “understanding” of flying. Does it really change the situation if the gadget can now deal with different wording? I don’t think so.

Yet I am a software engineer, and in my field many companies now mandate that my peers use such tools whenever possible. In the past, when developers searched for solutions in various internet discussions, managers would raise their eyebrows and start talking about proper skill sets. It didn’t stop us then, and it wouldn’t stop us now if they tried to prohibit the use of these tools. Nor would it stop us from using our own brains when they are more effective than LLMs - because objective results matter in the end, no matter what the guidance is.

That said, it is also true that even a person with a PhD-level understanding will appreciate a gadget that gives meaningful answers to their questions, especially when their colleagues fail to do so. These days the tools can also handle many routine tasks quite well.

But it is also true that everyone fears the specter of a software engineer (or a co-pilot) whose understanding comes only from having a gadget in their pocket capable of answering fill-in-the-blank tests at an expert level, independent of wording.

This is about the point in this article where greed and inhumane motivations enter the picture at many different levels. Let us try to explore them one by one.

First, if automated AI tools handle most routine tasks, a senior software engineer may be left only with the most cognitively demanding work - problems AI cannot solve. Yet humans are not designed to sustain such intensity for eight hours a day. It is like telling a sprinter that everything in his life will be taken care of except the one thing he excels at - sprinting. The result is exhaustion and burnout, since the human body cannot easily be upgraded the way hardware can. Observations like this will likely apply to many - perhaps most - white-collar professions in the future. For now, however, few people seem concerned. If we fail to address the issue, we may soon find ourselves in a situation not unlike that of factory workers a hundred years ago. But God did not create men as mere human resources; He created us in His own image.

At the other end of the spectrum, junior engineers face a different risk. They may not be given enough time to reflect on the deeper relationships and consequences within their craft - the kind of understanding that grows when one struggles with problems and learns to figure things out independently. If short-term productivity gains are constantly prioritized, they may never have the chance to grow into senior engineers and may remain merely operators of automated tools. That would squander talent and could eventually lead to an intellectual and creative plateau from which it would be difficult to recover.

This concern does not apply only to software engineering; it is simply the first field in which these risks have become clearly visible. And to be honest, we cannot really say that we live in an age of intellectual and creative mountain ranges. At best, we are already living among rolling plains - though few of us realize it.

The training data behind LLMs were built upon the work of people with a deep understanding of their fields. We should not risk squandering this creative richness and turning our civilization into a landscape of shallow, leveled plains - living only on the legacy of its great ancestors, who may one day appear like demigods of intellect and dignity beside our diminished contemporaries.

As a human being and a practicing Catholic, my primary motivation at work is to serve my neighbors. In this context, that means helping customers and colleagues live even a tiny bit more purposeful lives in accordance with what I recognize as God’s will. I do not experience God’s will as a set of traditional values, general wisdom, or ideology. Rather, it often comes to me as small, concrete hints and gifts from Him, for He is a living God.

The most innovative and creative ideas that have ever occurred to me do not come from my own initiative or from any particular way of life. They simply happen to me - almost like strange coincidences - undeserved gifts. I can only receive and understand them because, in limited ways, I am able to empathize with other human beings, since these small inspirations are always meant as gifts for others. Recognizing this fills my life with purpose.

In some sense, it works this way for every human being. You may call it randomness, and you may choose not to empathize and receive, but what Catholic doctrine describes is about as real as what physics textbooks describe.

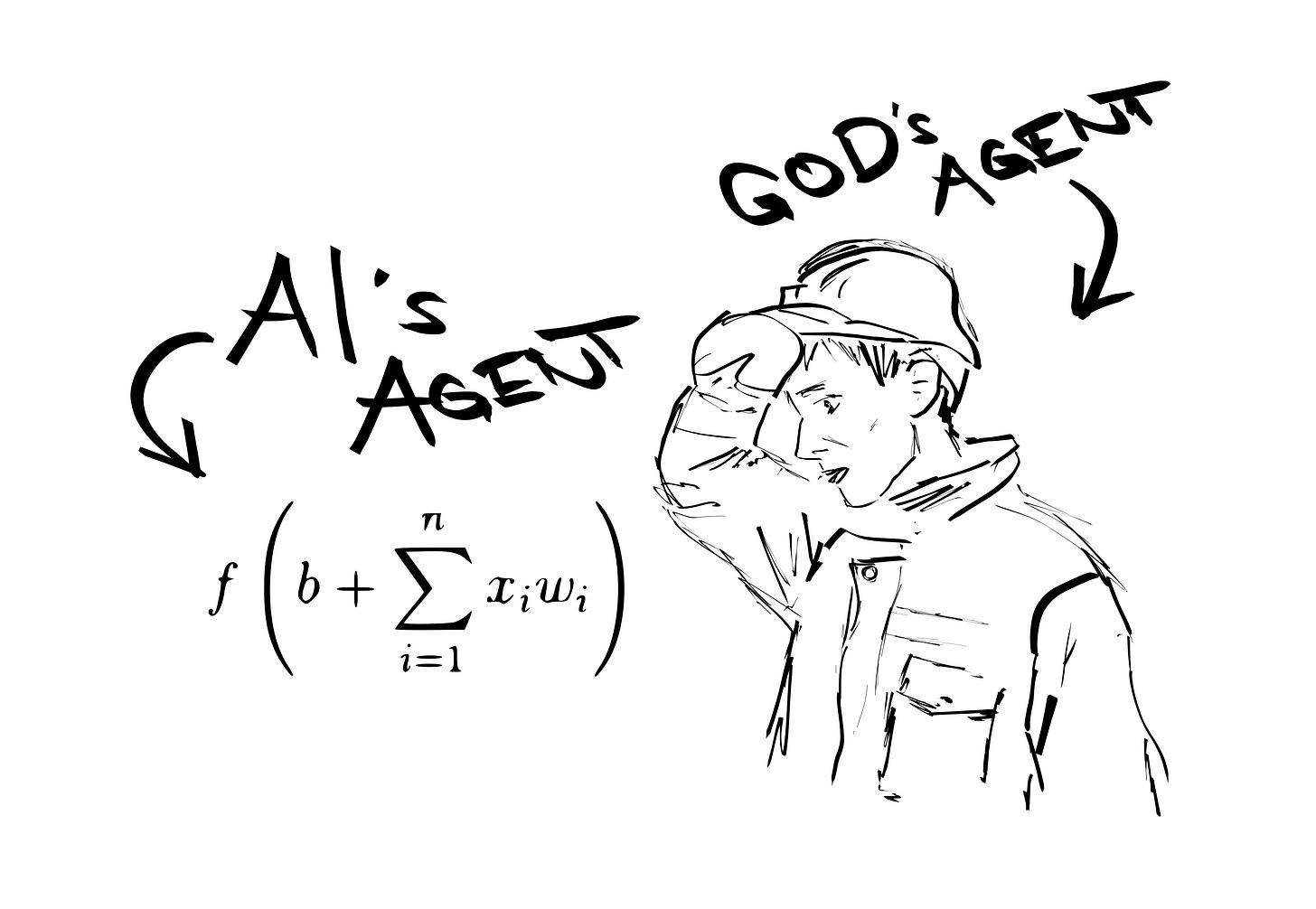

We are not robots; we are God’s agents. That is our specialty in the labor market - our added value. You might also call this teamwork or a customer-centric attitude. But this is simply what we do as human beings. We do not merely shovel data from one heap to another in an attempt to maximize selected metrics.

By saving us time on routine work, LLMs might free our hands to be more creative and inventive in that uniquely human space. But that will only happen if the time we save is reclaimed for that purpose. If it is, it might bring us closer, in more direct ways, to our customers and colleagues - making us less narrowly technical, less isolated behind technical concerns, and more broadly engaged. This is what some of the designers of these tools really intended.

Unfortunately, this is not what is currently happening. There is substantial evidence that big tech is not pursuing a vision of an economy in which we serve one another in ever more perfect ways, but rather one in which we compete to accumulate power. This is no surprise, since the struggle for power lies at the heart of Marxism - the only rational path to follow if one assumes that there is no God - and this new “elite” is full of cultural illiterates.

What I describe below is not a transition to another stage of market capitalism. The destination is not a prosperous society, but a poor one in which a small group of the miserable imposes totalitarian rule over a population that is poorer and even more miserable, yet fully dependent on it to receive just enough to barely cover its basic needs. We have seen this dynamic many times before - one that appears almost as robust as a natural law.

To make things clearer, let me describe an alternative future - one that may yet turn out to be the real future, only somewhat delayed. There is still hope.

One of the most attractive aspects of studying information technology is the ability to manage enormous complexity through layers of abstraction. For example, I can buy a couple of chips and build a small device to monitor temperature and humidity at home. To do that, I need documentation for the chips, and I also need a conceptual understanding of how they are built internally - but not in extreme detail. Their manufacturer has agreed to follow certain common conventions; we can call them contracts.

I trust the manufacturer, and because I conceptually understand how the chips work, I can also make reasonable guesses about the conditions under which they might fail. That is an example of a layer of abstraction.

Similarly, when I buy a computer, I can install the same software that runs on many other computers, even though they may contain wildly different processors and components. Thanks to another layer of abstraction, it does not matter what specific processor or components are inside. There is another “contract” - another abstraction layer - that allows me to control the computer in essentially the same way. Layers of abstraction allow us to accomplish almost effortlessly tasks that would otherwise be unbearably complex - something approaching rocket science.

It is quite tempting for any engineer to build something on top of LLMs, yet the proper abstraction layer is not really there yet. For instance, we know that LLMs power modern automatic translators and are capable of capturing many of the nuances of language remarkably well. A specialized, well-trained model could help me build practical tools for writers - tools that would help them understand meaning, etymology, and connotation, all presented in an informative graphical way.

Such an LLM could be relatively small and not terribly intelligent or versatile. I could run it on my own laptop; there would be no need to build a new power plant to operate it in a data center. It would only need to be stable and reliable.

Yet somehow nobody seems to produce such a model. I could rely on one of the large LLM providers, but their systems are too slow, too general, too constrained, and too unstable for this purpose. They boast that what works today may work quite differently tomorrow. Most of what I would need to know about the training data, internal architecture, and other crucial aspects is kept secret.

For instance, I have no idea what kind of licensing fees I might face in the future. These companies are still burning through billions of dollars in investment, and nobody really knows what the true operating costs are for a use case like mine. They are building something enormous all at once, fueled by vast amounts of capital, and there seems to be little room to help someone like me build a business incrementally - step by step, guided by customer feedback.

Similarly, LLMs could help address the frustration users face when navigating many contemporary applications, with their endless buttons, text fields, and controls. The result is often a usability mess - and beneath the surface, the technology can be even messier. This seems like a perfect opportunity for a small, well-crafted LLM designed to let users control applications through natural language, while clearly confirming whether their intentions have been understood correctly.

Once again, I find myself stuck filling my applications with endless buttons and text fields, while the major LLM providers appear to be racing to build dozens of new power plants to support their vast, newly built data centers. Why?

I could go on and on with dozens of use cases, but let me mention one last example. For this one, I would gladly rely on the large LLM providers, with their vast data centers and massive models. It is somewhat of a journalistic use case: automatically sifting through streams of new data to find whatever topics my users have said they care about.

But here is another complication. Keep in mind that these enormous models are trained to handle trillions upon trillions of question–answer pairs. Adding new information requires careful testing to ensure that the model has not “broken” what it previously learned. Recomputing such a massive body of data again and again might indeed require the output of a nuclear power plant - or ten, or twenty of them.

So how fresh can the data inside these models really be? Can such periodic recalculation ever become profitable? That remains something of a mystery. We hear that there has been a lot of promising research progress - and that more investment is needed.

Why are they doing this? Isn’t it somewhat irrational? The answer is simple: only by building an enormous formula that encompasses all fields of human endeavor can they hope to replace white-collar workers altogether, with their weak bodies and inconvenient conscience. Senior or junior, human workers simply don’t scale.

Obviously, this could only work if humans are not God’s agents and if Catholic doctrine is merely a fairy tale. It should also be said that there have recently been numerous reports that many actors in the markets are speculating against the success of the major LLM providers. But could big tech succeed at least halfway? If it did, the consequences would likely be severe: a huge wave of unemployment, a sharp rise in energy prices, and a steep plunge in consumer demand, as unemployed white-collar workers would have little money to spend.

The report that recently shook Wall Street - The 2028 Global Intelligence Crisis (often referred to as the Citrini Report or the “AI Doomsday Report”), published by Citrini Research in February 2026 - went viral for outlining a “heads-I-win, tails-you-lose” dilemma in which both the success and the failure of AI could lead to severe economic downturns.

We will likely all be poorer soon. The question is whether our children will end up with their intellects permanently leveled. That question is now just dozens of gigawatts of power output away. Will they live with the dignity of well-cared-for cattle, owing everything they have to a new and powerful elite?

It is not yet clear whether this is science fiction or not. But what is certain is that these CEOs from Silicon Valley truly mean what they say. And they can only mean it because Catholic engineers are no longer in charge.